The Bandwidth of Reality

Part 6 of "The Inference Universe": Why some channels are wide, some narrow, and what happens when we widen them

This is Part 6 of the series on Inference. Part 1 was on the Physics of Inference, to wonder at mechanism (waves, fields). In Part 2, I extend the framework to social systems and society, including recognition of connection (networks, cascades). I carry this forward to markets and commerce in Part 3, bringing in more clarity about systems (flows, topology). In Part 4, I use inference as the lens to look at our “set”: intimacy, self-knowledge and self-awareness, with a touch on AI. Part 5 covers what happens when we have to interact and live with systems that can infer faster than us.

The series is a formalisation of my world view, my mental model and my sense of interconnectedness. Hence it zooms from fundamental physics → human collectives → economic systems → individual consciousness → cosmic future → Reality as we perceive it → symmetry in nature and physics. Each part uses the same vocabulary (priors, posteriors, channels, updates) but applies it to a new domain, building on my cumulative understanding.

In 1948, a mathematician at Bell Labs published a paper that would quietly reshape the world.

Claude Shannon wasn’t trying to explain the universe. He was trying to figure out how many telephone calls could fit on a wire. But in answering that question, he discovered something deeper: a fundamental law governing how much information can flow through any channel, whether electrical, acoustic, biological, social or economic.

The law is simple. And its implications are vast.

Since Part 1 of this series, we’ve described the universe as an inference machine: state changes propagating through channels, beliefs updating on evidence, predictions correcting against reality. But we’ve left a question unanswered: why do some inferences propagate instantly while others take generations or even eons?

The answer is bandwidth. Or to term it better: Exchange Capacity.

What is it? It is the width of the pipe that carries the data. This is the quantitative backbone beneath everything we’ve discussed. Physics, societies, markets, minds: they all run inference. But they run it at different speeds, through pipes (channels) of different widths, against noise of different intensities. The capacity of the channel determines what’s possible, and how much is possible.

Let’s look at the limits of this channel.

Shannon’s Gift

The Channel Capacity Theorem

Claude Shannon proved something remarkable:

for any communication channel, there exists a maximum rate at which information can be transmitted reliably.

The formula:

C = B × log₂(1 + S/N)

Where:

C = Channel capacity (bits per second)

B = Bandwidth (the range of frequencies the channel can carry)

S/N = Signal-to-noise ratio (how much signal versus how much noise)

This isn’t an approximation. For a channel with a given bandwidth and Gaussian noise, it’s a hard limit of nature, as fundamental as the speed of light. No clever engineering can exceed it. You can approach it asymptotically with better encoding, but you can never surpass it.

Channel capacity is the maximum rate at which information can be reliably transmitted through a channel, given its bandwidth and noise characteristics.
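To make the formula concrete, here is a minimal Python sketch. The 3 kHz bandwidth and 30 dB signal-to-noise ratio are assumed, textbook-style numbers for an analog voice line, not figures taken from Shannon’s paper, and the function names are my own.

```python
import math

def channel_capacity(bandwidth_hz: float, snr_linear: float) -> float:
    """Shannon-Hartley capacity in bits per second: C = B * log2(1 + S/N)."""
    return bandwidth_hz * math.log2(1 + snr_linear)

def db_to_linear(snr_db: float) -> float:
    """Convert a signal-to-noise ratio from decibels to a plain power ratio."""
    return 10 ** (snr_db / 10)

# Assumed example values: a 3 kHz voice line with a 30 dB signal-to-noise ratio.
voice_line = channel_capacity(3_000, db_to_linear(30))
print(f"{voice_line:,.0f} bits/sec")  # roughly 30,000 bits/sec

# Doubling the bandwidth doubles the capacity...
wider = channel_capacity(6_000, db_to_linear(30))
# ...while doubling the signal power only adds about one extra bit per hertz.
louder = channel_capacity(3_000, 2 * db_to_linear(30))
print(f"wider: {wider:,.0f}  louder: {louder:,.0f}")
```

The comparison at the end is the part worth noticing: capacity grows linearly with bandwidth but only logarithmically with signal power, which is why widening the pipe usually beats shouting louder.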

Why This Matters for Everything

Shannon derived this for telephone wires. But it turns out the structure is universal.

Any system that transmits information has:

A bandwidth: the range of “frequencies” or distinctions it can carry

A noise floor: interference, errors, ambiguity that corrupt the signal

A capacity: the maximum reliable throughput, given the above two factors

This applies to:

Electromagnetic waves through space

Sound waves through air

Neural signals through axons

Words through conversation

Prices through markets

Laws through institutions

Genes through generations

Each of the above is a channel. Each has a capacity of its own. That capacity shapes what can flow through it.

The Hierarchy of Bandwidths

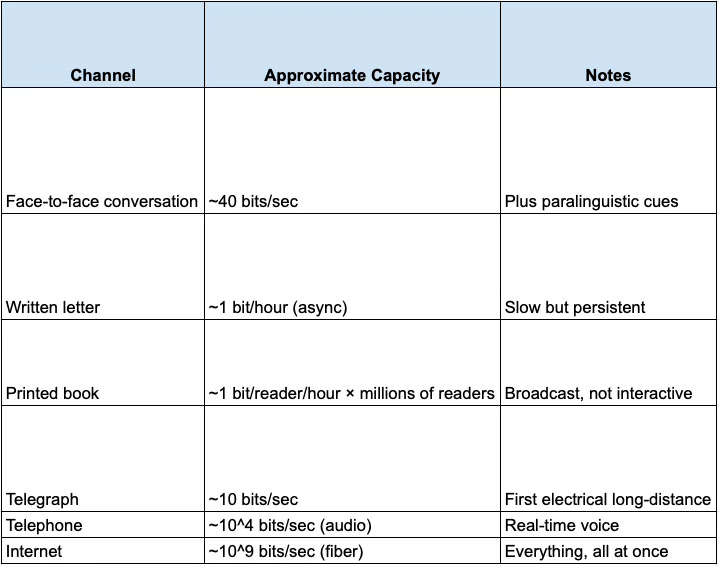

Let’s map the exchange capacities across the domains we’ve covered in Parts 1 to 5.

Physical Channels

Light is the universe’s widest commonly-available channel. This is why fiber optics revolutionised communication, why we take in far more through our eyes (photons) than through our ears, and why physicists today know more about distant galaxies than about the deep oceans right here on Earth.

Biological Channels

Here’s the striking fact: your sensory systems take in millions of bits per second, but your conscious bandwidth is roughly 40 bits per second. Everything else is processed below awareness: predicted, filtered, compressed. Only the errors bubble up.

This 40-bit bottleneck is you. It’s what you can attend to, deliberate on, choose between. Everything humans have ever built (language, writing, institutions, technology) is either flowing through this bottleneck or trying to widen it.

Social Channels

The history of civilisation as we know it is substantially the history of widening these channels.

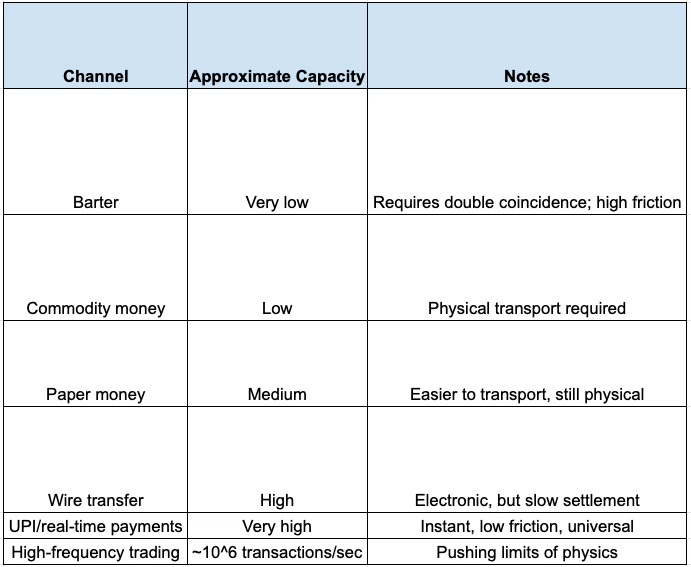

Economic Channels

Money’s evolution is bandwidth expansion. Each step of our financial evolution reduced friction, increased throughput, and lowered the noise (counterfeiting, default risk, settlement failure).

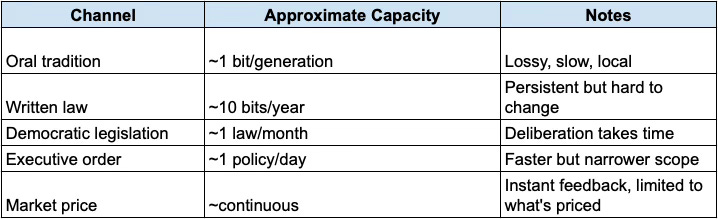

Institutional Channels

Institutions are slow channels by design. Constitutions have low bandwidth because we want them to be hard to change. The stickiness is a feature: it prevents noise (temporary majorities, panics) from corrupting the signal (stable rights, foundational rules).

The Capacity Equation for Any Domain

Let’s generalize Shannon’s formula beyond telecommunications.

For any exchange system:

Effective Capacity = (Distinctions per unit time) × log₂(1 + Trust/Noise)

Where:

Distinctions per unit time: How many different states can be transmitted (vocabulary size × speed)

Trust: The degree to which the receiver believes the signal reflects reality

Noise: Interference, deception, ambiguity, error

Examples

Language capacity:

Distinctions: ~50,000 words × ~3 words/second = 150,000 distinctions/sec possible

But trust varies (do I believe you?) and noise is high (ambiguity, mishearing)

Effective capacity: ~40 bits/sec when communication succeeds

Market price capacity:

Distinctions: continuous price levels

Trust: depends on market integrity, liquidity, regulation

Noise: manipulation, insider trading, random fluctuation

Effective capacity: very high in liquid markets, very low in manipulated ones

Institutional capacity:

Distinctions: limited (laws are binary: permitted/forbidden)

Trust: depends on enforcement, legitimacy

Noise: corruption, selective enforcement, ambiguity

Effective capacity: often very low; laws change slowly, and compliance is uncertain
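The generalised formula can be sketched in a few lines of Python. Trust and noise have no agreed units, so every score below is a hypothetical, unitless number chosen only to show the shape of the curve. The last calculation is my own side note rather than part of the formula: if each word were an equally likely pick from a ~50,000-word vocabulary (an assumption real language does not satisfy), each word would carry at most log₂(50,000) bits.

```python
import math

def effective_capacity(distinctions_per_sec: float, trust: float, noise: float) -> float:
    """Effective Capacity = distinctions/sec * log2(1 + trust/noise)."""
    if noise <= 0:
        raise ValueError("noise must be positive")
    return distinctions_per_sec * math.log2(1 + trust / noise)

# Hypothetical, unitless trust and noise scores, purely for illustration.
high_trust = effective_capacity(distinctions_per_sec=3, trust=9.0, noise=1.0)
low_trust = effective_capacity(distinctions_per_sec=3, trust=0.1, noise=1.0)
no_trust = effective_capacity(distinctions_per_sec=3, trust=0.0, noise=1.0)
print(f"high trust: {high_trust:.2f}  low trust: {low_trust:.2f}  no trust: {no_trust:.2f}")

# Side note (assumes equally likely words): bits per word times words per second.
raw_speech_ceiling = 3 * math.log2(50_000)
print(f"raw speech ceiling: {raw_speech_ceiling:.1f} bits/sec")
```

With the same number of distinctions per second, the channel carries far less once trust drops, and nothing at all when trust is zero. The side note lands in the same neighbourhood as the ~40 bits/sec figure, though that may be coincidence rather than derivation.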

The Trust Multiplier

Notice that the concept of trust appears where Shannon had signal power. This is intentional. What does this really translate to though?

In physical channels, signal power is literal: how loud is the transmission?

In social and economic channels, trust is the functional equivalent of being loud. A message from a trusted source carries more information than the same message from an untrusted source, because you willingly update more on it. Trust lowers the effective noise and reduces friction.

Low trust = high noise. If you don’t believe the sender, every message is ambiguous. The channel capacity collapses.

This is why trust is so valuable. It’s not just a nice social quality to have. It’s bandwidth. High-trust societies can coordinate faster because their channels carry more information per interaction.

Bottlenecks as Power

Here’s the structural insight from all this: whoever controls the narrow point in a channel controls the flow.

The Attention Economy

Human conscious bandwidth is ~40 bits/sec. The internet produces ~10^18 bits/day. The ratio is absurd.

This creates scarcity: not of information, but of attention. And scarcity creates value.

The business model of the modern internet is: capture attention, then sell access to it. Google, Facebook, TikTok - they’re not information companies. They’re bottleneck companies. They sit at the narrow point (your 40 bits/sec) and charge tolls for access.

In inference terms: they control which prediction errors reach your model. They shape what you update on. This is power.
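The “absurd” ratio is easy to put a number on. A back-of-the-envelope sketch in Python, using only the two figures quoted above (both are order-of-magnitude estimates) and assuming one person attending around the clock:

```python
SECONDS_PER_DAY = 24 * 60 * 60

conscious_bits_per_sec = 40      # figure quoted in the text
internet_bits_per_day = 1e18     # figure quoted in the text (order of magnitude)

# What one person could consciously attend to in a day without ever sleeping.
conscious_bits_per_day = conscious_bits_per_sec * SECONDS_PER_DAY
ratio = internet_bits_per_day / conscious_bits_per_day

print(f"One person, attending nonstop: {conscious_bits_per_day:,} bits/day")
print(f"Internet output per attending person: about {ratio:.1e} to 1")
```

Under these assumptions a single person can attend to a few million bits a day, against hundreds of billions of times that much being produced.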

Liquidity Provision

In markets, the bottleneck is liquidity: the ability to exchange without moving the price.

Market makers sit at this bottleneck. They offer to buy and sell at quoted prices, providing the capacity for others to trade. In exchange, they capture the bid-ask spread.

High-frequency traders do the same at microsecond timescales. They’re not predicting where prices will go. They’re providing capacity (smoothing the flow of exchange) and extracting a fee for the service.

This is why liquidity crises are so dangerous. When the bottleneck providers withdraw, the channel capacity collapses. Prices gap drastically. Markets seize up. The inference network stops propagating and everything falls.

Network Hubs

In any network, some nodes have more connections than others. These hubs are bottlenecks: information flowing through the network disproportionately flows through them.

Social media influencers are hubs. So are news organizations, central banks, and API providers. They don’t create all the information; they route it. And routing power is control.

In inference terms: hubs determine whose updates propagate widely. A belief that enters a hub spreads. A belief that doesn’t, dies locally.
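A toy example makes the routing point visible. The five-node network below is entirely hypothetical: one node, named "hub", links two otherwise separate clusters. The sketch finds the best-connected node and then checks how far a belief starting at "a" can spread with and without it.

```python
from collections import deque

# Hypothetical toy network: "hub" links two otherwise separate clusters.
network = {
    "a": {"b", "hub"},
    "b": {"a", "hub"},
    "hub": {"a", "b", "x", "y"},
    "x": {"hub", "y"},
    "y": {"hub", "x"},
}

def reach(graph, start, removed=frozenset()):
    """Breadth-first search: every node a belief starting at `start` can reach."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbour in graph[node]:
            if neighbour not in seen and neighbour not in removed:
                seen.add(neighbour)
                queue.append(neighbour)
    return seen

# The node with the most connections is the hub.
degrees = {node: len(links) for node, links in network.items()}
hub = max(degrees, key=degrees.get)

with_hub = reach(network, "a")
without_hub = reach(network, "a", removed={hub})
print(hub, sorted(with_hub), sorted(without_hub))
```

With the hub in place the belief reaches all five nodes. Remove it and the belief stays inside its own cluster, which is what “dies locally” looks like in graph terms.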

The General Pattern

If you want to understand where power concentrates, find the bottleneck. If you want to redistribute power, widen the channel or create alternatives.

Civilization as Bandwidth Expansion

Now let’s look at our human history through this lens.

The Major Transitions

Each major civilizational transition corresponds to a bandwidth expansion:

1. Language (~100,000 years ago)

Before: Gesture, vocalization - very low bandwidth

After: Symbolic speech - ~40 bits/sec, but with unlimited vocabulary

Effect: Complex coordination became possible; culture can accumulate and propagate.

2. Writing (~5,000 years ago)

Before: Information limited by living memory

After: Information persists across generations; broadcast possible

Effect: Law, history, literature, accumulated knowledge all came into being.

3. Printing (~500 years ago)

Before: Books copied by hand; expensive, rare, error-prone

After: Mass production of identical copies

Effect: The Reformation was born, the Scientific Revolution was unlocked, and mass literacy became possible.

4. Telegraph/Telephone (~150 years ago)

Before: Information travels at speed of physical transport

After: Instant communication across distances

Effect: Global markets grew massive, coordinated time zones enabled better commerce, and news cycles started to fall in sync.

5. Internet (~30 years ago)

Before: Broadcast media (few to many) or point-to-point (one to one)

After: Many-to-many, instant, global, multimedia, UGC (User Generated Content)

Effect: Everything changed.

6. Mobile payments/UPI (~10 years ago)

Before: Cash or slow electronic transfer

After: Instant, universal, nearly free value transfer

Effect: Financial inclusion, new business models, reduced friction for transactions and commerce.

Each transition didn’t just make existing activities faster. It made new activities possible. When you 10x or 100x channel capacity, you don’t get more of the same; you get phase transitions.

The Pattern

The pattern is simple:

A bottleneck constrains exchange

Technology widens the channel

New forms of coordination become possible

Power structures shift (often violently)

Society reorganizes around the new capacity

A new bottleneck becomes binding

We’re always hitting limits. The question is which limit we will hit next, and when it will break.

The AI Discontinuity

Now let’s look forward.

The Human Bottleneck

Throughout history, the human brain has been the central bottleneck. Every inference ultimately had to flow through human minds:

Scientists had to understand their discoveries

Leaders had to decide on policies

Engineers had to design solutions

Artists had to imagine creations

The ~40 bits/sec conscious bandwidth was the limiting constraint on civilizational throughput. AI changes this.

What Happens When the Bottleneck Bypasses Us?

If AI can:

Read and synthesize faster than humans (already true)

Generate coherent text and code faster than humans (already true)

Make predictions in complex domains better than humans (increasingly true)

Design systems and solutions without human comprehension (emerging)

Then the human bottleneck is no longer the binding constraint on certain types of inference.

This is not about AI being “smarter.” It’s about bandwidth. An AI can process 10^9 tokens in the time a human processes 10^2.

The throughput difference is seven orders of magnitude.

The Implication

When throughput increases by 10^7x, you don’t get incremental change. You get phase transitions we can’t anticipate.

Consider: if you could suddenly read 10 million books per second, what would you do with that capacity? The question almost doesn’t make sense; the capability is so far outside human experience that we can’t plan for it.

This is the real AI discontinuity: not a faster horse, but the invention of the automobile. Not more scribes, but the printing press. The capacity leap is large enough that qualitative change will surely follow.

The Alignment Problem, Restated (Again)

In Part 5, I framed alignment as prior-sharing. Here’s the bandwidth framing:

The alignment problem is the challenge of maintaining meaningful human oversight when AI inference throughput exceeds human comprehension bandwidth by orders of magnitude.

If an AI can explore 10^9 possible plans while a human can evaluate 10^2, how do we ensure the selected plan is one we’d endorse?

We can’t check all of them. We can’t even check a representative sample. We’re trusting the AI’s compression of the option space; its summary of what matters.

This is the bandwidth problem: we need to maintain alignment through a channel (human understanding) that’s vastly narrower than the process we’re trying to align.

Possible Responses

1. Interpretability: Make AI reasoning transparent enough that we can audit it at our bandwidth. (Hard; AI internals may not compress into human concepts.)

2. Delegation hierarchies: Use AI to monitor AI, with humans only checking top-level summaries. (Risky; who watches the watchers?)

3. Constrained domains: Limit AI to domains where we can verify outputs, not processes. (Limiting; doesn’t scale to complex decisions.)

4. Value learning: Have AI infer what we want from our behavior, so alignment is built-in. (Dangerous; our revealed preferences aren’t our reflective preferences.)

5. Slow takeoff: Maintain human-speed integration as AI capabilities grow, allowing gradual trust-building. (Hopeful; but may not be possible.)

None of these are fully satisfactory. The bandwidth mismatch is structural. It won’t be solved by current cleverness alone.

Designing for Capacity

Let’s bring this back to practical application.

If you understand exchange capacity, you have a design heuristic:

When building systems, always ask: what’s the bottleneck, and how do I widen it?

The Questions to Ask

1. What channel is being used?

What’s the medium? (Language, price, code, image, touch)

What’s the bandwidth? (How much can flow per unit time?)

What’s the noise? (What corrupts or distorts the signal?)

2. Where’s the bottleneck?

In a conversation: is it speaking speed, comprehension, or trust?

In a market: is it liquidity, settlement speed, or information asymmetry?

In an organization: is it decision-making, communication, or execution?

3. How can capacity be increased?

Reduce noise (increase signal quality, build trust)

Widen bandwidth (new medium, better encoding, parallel channels)

Remove intermediaries (direct connection, disintermediation)

Compress better (higher-information representations)

Examples

AI-powered sales calls (conversation intelligence):

Channel: Sales calls (speech)

Bottleneck: Managers can’t listen to every call; sales reps forget key moments

Solution: AI extracts signal from noise, compresses hours into minutes

Capacity increase: Manager effective bandwidth goes from ~10 calls/week to patterns across thousands

UPI:

Channel: Value transfer

Bottleneck: Cash handling, bank visits, settlement delay

Solution: Instant mobile transfer via phone number

Capacity increase: Transaction friction → near zero; financial inclusion explodes

OrbitCover (parametric insurance):

Channel: Risk transfer

Bottleneck: Claims processing (slow, adversarial, high friction)

Solution: Parametric triggers; if flight delayed >2 hours, pay automatically

Capacity increase: Verification bandwidth from weeks (claims adjustment) to seconds (data check)
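The parametric trigger is simple enough to sketch. This is a minimal illustration of the idea, not OrbitCover’s actual implementation: the class name, the function name and the payout amount are all hypothetical, and only the 2-hour threshold comes from the example above.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class DelayPolicy:
    threshold_minutes: int = 120   # ">2 hours" from the example above
    payout: float = 100.0          # hypothetical payout amount

def settle(policy: DelayPolicy, delay_minutes: int) -> float:
    """Pay the fixed amount if the observed delay exceeds the threshold."""
    return policy.payout if delay_minutes > policy.threshold_minutes else 0.0

policy = DelayPolicy()
print(settle(policy, 95))    # 0.0
print(settle(policy, 150))   # 100.0
```

The whole claims process collapses into one comparison against a trusted data feed. There is nothing to adjudicate, which is where the weeks-to-seconds gain comes from.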

The Meta-Pattern

In each case, value creation comes from identifying a bandwidth constraint and engineering around it. This is what technology does. It widens channels. It increases exchange capacity. It lets more inference flow across the system.

The Limit of Limits

Let me end with a reflection.

We’ve mapped a hierarchy of bandwidths, from the capacity of light to the 40 bits/sec of human consciousness. We’ve seen how bottlenecks create power, how civilizations advance by widening channels, and how AI may soon bypass the human bottleneck entirely.

But there’s a deeper limit we haven’t yet named.

The Thermodynamic Floor

Shannon’s theorem tells you the maximum capacity for a given signal and noise level. But it doesn’t tell you the cost. Landauer’s principle does:

Erasing one bit requires at least kT ln(2) of energy, where k is Boltzmann’s constant and T is the temperature of the surroundings. Computation is not free. Information is physical.

As we build systems of ever-greater capacity (AI data centers consuming gigawatts, global communication networks spanning the planet), we’re drawing down the universe’s free energy budget.

This isn’t imminent doom. The sun delivers roughly 10^17 watts to Earth; humanity uses roughly 10^13. We have headroom. But the headroom is finite.
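For a sense of scale, here is Landauer’s bound worked out in Python. The 300 K temperature is my assumption (roughly room temperature); the two power figures are the rounded ones quoted above. Treat the result as a theoretical ceiling only, since practical hardware dissipates many orders of magnitude more than this per bit.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23   # exact SI value of the Boltzmann constant

def landauer_limit_joules(temperature_kelvin: float) -> float:
    """Minimum energy to erase one bit: k * T * ln(2)."""
    return BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)

room_temp = landauer_limit_joules(300.0)    # assumed ~room temperature
print(f"{room_temp:.2e} J per erased bit")  # about 2.87e-21 J

# Ceiling on bit erasures per second if all of humanity's ~1e13 W
# (figure from the text) were spent exactly at the Landauer limit.
max_erasures_per_sec = 1e13 / room_temp
print(f"{max_erasures_per_sec:.1e} erasures/sec at most")

# Headroom between the two power figures quoted in the text.
headroom = 1e17 / 1e13
print(f"headroom: about {headroom:,.0f}x")
```

The cost per bit is tiny, but it is not zero, and it scales with temperature. That is the sense in which information is physical.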

The Question Beneath the Question

Exchange capacity is about throughput: how much can flow? But also, throughput for what? We can build channels of arbitrary width. We can process information at arbitrary speed. But what should we compute?

This is not a bandwidth question. It’s a values question. And values don’t have a Shannon limit. They’re not optimizable in the same way.

The bandwidth of our reality is vast. We keep widening it. AI will widen it further, perhaps beyond human comprehension. But the question of what to do with that bandwidth (what inferences are worth making, what exchanges are worth having, what states are worth propagating) remains human. At least for now.

Coda: The Narrow and the Wide

Here’s the image I want to leave you with.

The universe has channels of wildly different widths. Light carries 10^15 bits per second. Your consciousness carries 40. The ratio is about 2.5 × 10^13; roughly twenty-five trillion to one.

And yet -

Those 40 bits are where you live. That narrow channel is where meaning happens, where love forms, where decisions are made. The wide channels carry raw data. The narrow channel carries you.

AI will widen many channels. It will process what we cannot, see what we cannot, move faster than we can follow. But the narrow channel: the human bandwidth where experience becomes meaning; will remain. Not because it’s efficient. Because it’s ours.

The bandwidth of reality keeps expanding. The bandwidth of a human life stays roughly constant. Forty bits per second, for maybe 80 years. A few billion moments of conscious experience.

That’s what you and I have. Not the universe’s throughput. Mine. Yours. Ours.

Use it wisely.

Appendix: Key References

Claude Shannon: “A Mathematical Theory of Communication” (1948). The foundational paper on information theory and channel capacity.

Rolf Landauer: “Irreversibility and Heat Generation in the Computing Process” (1961). The physical limits of computation.

Manfred Zimmermann: “The Nervous System in the Context of Information Theory” (1989). Bandwidth of human sensory and conscious processing.

Tim Wu: “The Attention Merchants” (2016). History of the attention economy and bottleneck control.

Albert-László Barabási: “Linked” (2002). Network science and the power of hubs.

James Gleick: “The Information” (2011). History of information theory and its implications.

César Hidalgo: “Why Information Grows” (2015). Information, networks, and economic development.

Carlota Perez: “Technological Revolutions and Financial Capital” (2002). How technology transitions reshape society.

Nick Szabo: “Shelling Out” (2002). The origins of money as bandwidth expansion for exchange.

Venkatesh Rao: “Breaking Smart” (2015). Software as universal bandwidth expansion.