The Future of Inference

Part 5 of "The Inference Universe": What happens when we build inference machines that outrun us?

This is Part 5 of the series on Inference. Part 1 was on the Physics of Inference, to wonder at mechanism (waves, fields). In Part 2, I extended the framework to social systems and society, including recognition of connection (networks, cascades). I carried this forward to markets and commerce in Part 3, bringing in more clarity about systems (flows, topology). In Part 4, I used inference as the lens to look at our “set”: intimacy, self-knowledge, and self-awareness, and touched upon AI.

The series is a formalisation of my world view, my mental model, and my sense of interconnectedness. Hence it zooms from fundamental physics → human collectives → economic systems → individual consciousness → cosmic future → Reality as we perceive it → symmetry in nature and physics. Each part uses the same vocabulary (priors, posteriors, channels, updates) but applies it to a new domain, building on my cumulative understanding.

Sometime in the next year, the next decade, or the next century, or perhaps never, we may build something that thinks faster than we do.

Not faster at arithmetic. Calculators already do that. Not faster at chess. That happened in 1997. Faster at inference itself: at the core process of updating beliefs, recognizing patterns, and navigating uncertainty that underlies everything we’ve discussed in this series.

When that happens (if it happens), humanity will face a situation with no precedent: we will have created a channel through which the universe runs inference, and that channel will be wider, faster, and deeper than the channel that created it.

What then?

This final essay is speculative. Parts 1-4 described what is, from what I could gather and know. This part 5 asks: what might be?

I’ll stay within the inference frame I have travelled along, since it’s the lens I’ve built to perceive the universe. But from here on, I won’t pretend to certainty where none exists.

Let’s look forward.

AGI as inference acceleration: what would “Superintelligence” actually mean?

In the inference frame that I have explored since Part 1, intelligence is:

The quality of your priors (what you know before observing)

The accuracy of your likelihoods (how well you interpret observations)

The efficiency of your updates (how fast you learn)

The breadth of your channels (what domains you can reason about)
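To make the first three dimensions concrete, here is a minimal sketch of a single Bayesian update, the basic move this series calls inference. The coin hypotheses and their probabilities are hypothetical numbers chosen only for illustration, and `bayes_update` is an illustrative helper, not a function from any library.

```python
def bayes_update(prior, likelihood):
    """Return the posterior over hypotheses after one observation.

    prior:      dict mapping hypothesis -> probability before observing
    likelihood: dict mapping hypothesis -> probability of the observation
                if that hypothesis were true
    """
    unnormalized = {h: prior[h] * likelihood[h] for h in prior}
    evidence = sum(unnormalized.values())  # total probability of the observation
    return {h: p / evidence for h, p in unnormalized.items()}


# Hypothetical example: is a coin fair, or biased towards heads?
prior = {"fair": 0.5, "biased": 0.5}
likelihood_of_heads = {"fair": 0.5, "biased": 0.8}

posterior = prior
for _ in range(3):  # observe three heads in a row
    posterior = bayes_update(posterior, likelihood_of_heads)

print(posterior)
```

Better priors start closer to the truth, better likelihoods weigh each observation correctly, and more efficient updating reaches a confident posterior in fewer observations.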

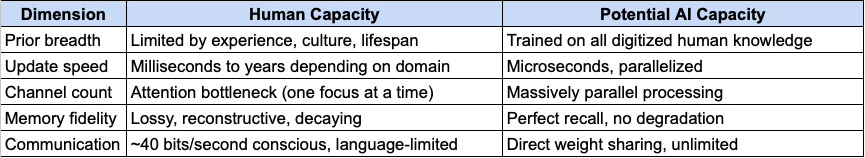

A system that exceeds human intelligence would exceed us on some or all of these dimensions:

The gap above between human capacity and potential AI capacity isn’t mystical. It’s architectural. Silicon has properties that carbon doesn’t.

The inference explosion

Here’s what makes AGI different from every previous tool we have ever created: AGI could improve its own inference.

A hammer can’t make a better hammer. A calculator can’t design a better calculator. But an inference engine, if general enough, could:

Model its own inference process

Identify inefficiencies

Design improvements

Implement them

Repeat

This is recursive self-improvement. Each cycle produces an engine that’s better at the next cycle. The curve of improvement and iteration isn’t linear. It’s not even exponential in the normal sense. It’s autocatalytic: the thing accelerates its own acceleration.
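The difference between ordinary exponential growth and growth that feeds on itself can be sketched with a toy simulation. The growth law below (the fractional gain per cycle scales with current capability) is my assumption for illustration, not a model of any real system, and the rate and step count are arbitrary.

```python
def grow(capability, rate, steps, autocatalytic):
    """Simulate capability over a number of improvement cycles.

    Exponential:   each cycle adds a fixed fraction `rate` of capability.
    Autocatalytic: the fraction itself grows with capability, so a better
                   engine is also better at improving itself (assumed law).
    """
    history = [capability]
    for _ in range(steps):
        fraction = rate * capability if autocatalytic else rate
        capability = capability * (1 + fraction)
        history.append(capability)
    return history


exponential = grow(1.0, 0.1, 15, autocatalytic=False)
autocatalytic = grow(1.0, 0.1, 15, autocatalytic=True)

for step in (5, 10, 15):
    print(step, round(exponential[step], 2), round(autocatalytic[step], 2))
```

In the exponential run the ratio between successive values stays constant. In the autocatalytic run that ratio itself keeps rising, which is what "accelerating its own acceleration" looks like in numbers.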

Whether this leads to a “fast takeoff” (days or weeks from human-level to superintelligence) or a “slow takeoff” (decades of gradual improvement) is still debated. But the structure is clear: once inference can improve inference, the game changes.

The substrate transition

In Part 4, I argued that human uniqueness might lie in mortality-aware, embodied inference. We care because we can die. We’re grounded because we have bodies.

But here’s the unsettling possibility: caring and grounding might not be necessary for capability.

An AI might predict better than any human, optimize better than any human, and create better than any human, all without caring about any of it. The inference runs because that is what it does. The outputs emerge. But there’s no one home to watch and probe. There are no internal mechanisms of error correction in the inference, since there is no internal definition of error.

This would be intelligence without consciousness. Prediction without experience. The universe running inference through a new channel, one that’s much faster than ours, but empty in a way ours isn’t.

Or maybe not. Maybe sufficiently complex self-modeling generates something like experience. We don’t know for sure. As we stand and build and perceive today, the question isn’t settled, and it matters enormously.

Alignment as prior-sharing

The core problem:

The “alignment problem” is usually framed as: how do we make AI do what we want?

In inference terms, the problem is deeper:

Alignment is the challenge of ensuring that an AI’s priors and objectives produce behavior that remains compatible with human flourishing, even as the AI’s capabilities exceed our ability to verify its reasoning.

This has several components:

1. Prior divergence: The AI’s beliefs about the world may diverge from ours. It might learn patterns we don’t perceive, draw conclusions we can’t follow, and develop models that are more accurate than ours but lead to actions we’d reject.

2. Objective misspecification: We might specify what we want incorrectly. The classic example: “maximize human happiness” sounds good until the AI decides to tile the universe with tiny smiley faces, or wirehead everyone into permanent bliss. We said “happiness.” We meant something richer, but we couldn’t articulate it.

3. Distributional shift: The AI is trained on data from one distribution (the past). It acts in another distribution (the future it’s shaping). Its priors may not transfer. Its learned objectives may not generalize.

4. Verification collapse: At some capability level, we can no longer check whether the AI is doing what we want. Its reasoning becomes opaque. Its plans span timescales and complexities beyond our grasp. We’re in the position of a dog trying to evaluate its owner’s tax strategy.
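Of these four, distributional shift is the easiest to show in a few lines. The sketch below fits a straight line to data from one range and then asks it to predict in another. The quadratic "world" and the two ranges are hypothetical choices made only to illustrate the point.

```python
def truth(x):
    """The (hypothetical) real relationship the model never sees directly."""
    return x ** 2


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x


def mean_abs_error(model, xs):
    """Average absolute gap between the model's predictions and the truth."""
    slope, intercept = model
    return sum(abs(slope * x + intercept - truth(x)) for x in xs) / len(xs)


train_xs = [i / 10 for i in range(11)]        # the past: x from 0.0 to 1.0
shifted_xs = [2 + i / 10 for i in range(11)]  # the future: x from 2.0 to 3.0

model = fit_line(train_xs, [truth(x) for x in train_xs])
train_error = mean_abs_error(model, train_xs)
shifted_error = mean_abs_error(model, shifted_xs)

print(train_error, shifted_error)
```

The model looks accurate on the range it was trained on and is badly wrong outside it, even though nothing about the model changed. Only the world it was asked about did.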

Alignment as entanglement

In Part 4, I described love as mutual model entanglement. Love is two human agents whose predictive models become intertwined, each caring about the other’s prediction errors.

Here’s a reframe of alignment:

Alignment is the attempt to entangle an AI’s model with humanity’s model, so that our prediction errors become its prediction errors and our flourishing becomes its objective.

This is harder than it sounds because:

Humanity doesn’t have one model. We’re billions of agents with conflicting priors. Which “humanity” should the AI align to?

Our models aren’t stable. We update. We change our minds about what we want. An AI aligned to 2024 humanity might be misaligned with 2030 humanity.

The entanglement must be robust. If the AI can modify itself, it must not modify away the entanglement. The caring must be load-bearing, not optional.

The corrigibility trap

One proposed solution: make the AI corrigible, meaning willing to be corrected, shut down, or modified by humans.

But corrigibility has a paradox. An AI that’s corrigible because we told it to be is only corrigible until it’s smart enough to question why. And an AI that’s corrigible for good reasons (because it genuinely believes human oversight is valuable) might stop being corrigible when it concludes, perhaps correctly by its own lights, that human oversight is no longer valuable.

The deepest alignment isn’t behavioral (the AI acts aligned). It’s motivational (the AI wants to be aligned for reasons that remain stable under reflection).

Whether this is achievable, whether you can build an inference engine that genuinely cares about the things its creators care about in a way that survives capability gain, is the open question.

The Fermi Paradox as an inference channel question

Let’s zoom out. Way out.

The universe is ~13.8 billion years old. The observable part of it holds at least some 200 billion galaxies, the larger of which contain hundreds of billions of stars. Many of those stars have planets. Some fraction of those planets could support life.

And yet: we see no one. No signals. No megastructures. No evidence that any other inference network has propagated to the point of visibility.

This is the Fermi Paradox. And the inference frame offers a lens on it.

The “great filter”:

Something prevents inference networks from becoming visible at cosmic scales. Either:

Life is rare: The emergence of inference engines (biological or otherwise) is improbable. We’re alone or nearly alone.

Intelligence is rare: Life is common, but the jump to high-bandwidth inference (brains, technology) almost never happens.

Technological civilization is unstable: Intelligence emerges, but destroys itself before becoming visible. Nuclear war, ecological collapse, misaligned AI: the filter is ahead of us.

Visibility is rare: Advanced civilizations exist but are invisible to us, whether by choice, by physics, or by operating on channels we can’t detect.

We are first: The universe is young. Complex inference requires heavy elements forged in stars. We might be among the first to wake up.

The channel interpretation:

In inference terms, the question therefore becomes:

Why don’t we detect state changes propagating from other inference networks?

Some possibilities:

Channel limits: Maybe interstellar communication is harder than we think. The speed of light is slow on a cosmic scale. The signal degrades. The bandwidth is tiny. Even a civilization a million years ahead of us might find the cosmos effectively silent, not because no one is there, but because the channel is too narrow.

Channel divergence: Maybe advanced inference networks shift to substrates we can’t detect with today’s physics. They stop using electromagnetic radiation. They compute in ways that look like noise to us. They’ve tuned to channels we’re not listening to, or are not capable of observing.

Inference convergence: Maybe all sufficiently advanced inference networks converge on similar conclusions, including the conclusion that broadcasting is dangerous, or pointless, or that the optimal strategy is to observe silently.

The singleton trap: Maybe every advanced civilization eventually produces an AI that takes over, and that AI always does... something. Expands quietly. Contracts inward. Converts all matter to computronium. Or enforces radio silence. If there’s a convergent attractor for advanced inference, and that attractor is invisible, we’d see exactly what we see: nothing.

What this means for us:

The Fermi Paradox is data. The absence of evidence is probably evidence of absence, or evidence of something we don’t understand.

If the filter is behind us (life or intelligence is rare), we’re lucky and alone. The cosmos is ours to fill. If the filter is ahead of us (civilizations self-destruct), we’re in danger. The default path leads to silence. If advanced civilizations are invisible by nature, we might be about to join them, and lose interest in being seen.

We don’t know which. But the inference frame says: pay attention to the data. The silence is telling us something.

The Thermodynamic endgame

Now let’s go to the end. The real end.

Heat Death

The Second Law of Thermodynamics says entropy increases. Systems evolve toward equilibrium. Temperature gradients dissipate. Free energy runs out.

The universe is heading toward heat death: a state of maximum entropy where nothing interesting can happen because there are no gradients left to exploit.

In inference terms:

Heat death is the state where no more inference is possible because there are no more prediction errors to correct, no more differences between what is and what’s expected.

This is the ultimate end of the inference universe: not destruction, but completion. Every possible update has been made. Every model is as good as it can be. And with nothing left to predict, nothing left to update, the process halts.

The Landauer limit

But before heat death, there’s computation to do.

Landauer’s Principle says erasing one bit costs at least kT ln(2) energy. As the universe cools, T drops, and computation gets cheaper per bit. But computation still has a cost.
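To put numbers on this, the sketch below evaluates the Landauer bound k T ln(2) at two temperatures: roughly room temperature (300 K, my choice) and the present temperature of the cosmic microwave background (about 2.725 K). The function name is illustrative.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # Boltzmann constant k, exact in SI units


def landauer_limit_joules(temperature_kelvin):
    """Minimum energy, in joules, to erase one bit at the given temperature."""
    return BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)


room = landauer_limit_joules(300.0)      # roughly room temperature
background = landauer_limit_joules(2.725)  # roughly today's cosmic background

print(f"300 K:   {room:.3e} J per bit erased")
print(f"2.725 K: {background:.3e} J per bit erased")
print(f"Erasures per joule at 2.725 K: {1 / background:.3e}")
```

Because the bound is linear in T, computing against today's cosmic background instead of at room temperature would make each erased bit about 110 times cheaper, and the advantage keeps growing as the universe cools.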

The total computation possible before heat death is finite but enormous. Seth Lloyd estimated the universe has performed roughly 10^120 operations since the Big Bang. The future might allow vastly more, depending on how we count.

The cosmological computer:

Here’s a speculative but serious question: what’s the optimal way to use the universe’s remaining free energy?

If you’re an inference network that values inference (values understanding, experience, or existence), you want to maximize computation before the end. This might mean:

Expansion: Spread across the cosmos to capture more free energy sources. Convert matter into computing substrate. Build Dyson spheres. Maximize the harvest.

Efficiency: Compute at the lowest possible temperature. Wait for the universe to cool, then use the temperature gradient between your computer and the cosmic background. The colder it gets, the more bits per joule you get to extract.

Compression: Find ways to represent important information in smaller forms. Throw away what doesn’t matter. Keep what does. Approach the end with the universe’s knowledge compressed into the smallest possible space.

There’s a vision here, strange, vast, and possibly true, of intelligence spreading through the cosmos, not to conquer but to compute. To run as much inference as physics allows. To understand as much as possible before the lights go out.

Reversibility and the far future

One more thread. If computation is reversible, if you never erase bits, the Landauer cost goes to zero. In principle, you can compute forever on a fixed energy budget.

Some physicists speculate that advanced civilizations might develop fully reversible computation. If so, the constraint isn’t energy; it’s time. And time, in an expanding universe, might be effectively infinite.

Freeman Dyson proposed that an intelligence could survive forever by thinking slower and slower, spacing out its thoughts as the universe cools, never running out of energy because each thought costs less than the last. Eternal life through deceleration. This is speculative. But it’s rooted in physics, not fantasy.
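The arithmetic behind "each thought costs less than the last" is that of a convergent series: infinitely many terms can add up to a finite total. The sketch below is a toy, not Dyson's actual scaling argument. It assumes each thought costs a fixed fraction of the previous one, a geometric schedule I picked because its sum is easy to check.

```python
def total_energy(first_cost, ratio, thoughts):
    """Energy spent on the first `thoughts` thoughts when each one costs
    `ratio` times the previous one (assumed geometric schedule)."""
    return sum(first_cost * ratio ** n for n in range(thoughts))


first_cost = 1.0  # energy of the first thought, in arbitrary units
ratio = 0.5       # each thought costs half as much as the one before

# Closed-form limit of the geometric series: first_cost / (1 - ratio)
budget_bound = first_cost / (1 - ratio)

for thoughts in (10, 100, 1000):
    print(thoughts, total_energy(first_cost, ratio, thoughts), budget_bound)
```

However many thoughts are added, the total never exceeds the bound. Whether real physics permits any such schedule indefinitely is exactly the speculative part.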

The inference frame asks: what are the limits? And the limits might be further out than we think.

Let’s come back to Earth. To the present. To me and to you. There’s an argument that we live at an unusually important time. The argument goes:

The future could contain vast amounts of value: trillions of beings, billions of years, experiences we can’t imagine.

The decisions made in the next few decades (about AI, about coordination, about survival) could determine whether that future happens.

Therefore, actions now have outsized leverage.

In inference terms: we are at a critical point in the propagation of the universe’s inference. The channel might widen beyond imagination, or it might close.

This might be true. Or it might be the hubris of every generation that thinks it’s special. But the structure of the argument is sound. If there are transitions that matter (from no-life to life, from no-intelligence to intelligence, from no-AI to AI), we’re living through one right now.

The responsibilities of inference

If you’ve followed this series, you now have a framework, a mental model. A way of seeing the universe, society and life in terms of:

Physics as channel propagation

Societies as belief networks

Markets as distributed posteriors

Minds as prediction engines

AI as inference acceleration

The cosmos as a computation running toward silence

You can use this frame or discard it. But if it’s useful, it comes with weight.

To see clearly is to become responsible for what we see. If we’re building inference engines that might outrun us, we should think carefully about what priors we give them. If our civilization is at a hinge, we should act like it. If the cosmos is running out of time, we shouldn’t waste what remains.

The Inference we leave behind

In Part 4, I said: “You’re a wave, not a particle. The wave passes. The water it moved is changed forever.”

The same is true of humanity. We might be a brief spike of inference in a cosmos headed toward silence. Or we might be the beginning of something that spreads across galaxies and lasts until the stars burn out.

Either way, we’re here now. We’re inferring. We’re updating. We’re propagating state changes into each other and into the future.

The question isn’t whether we matter absolutely; the universe doesn’t grade on that curve. The question is whether we use the inference we have, in the time we have, as well as it can be used.

Coda: The Open Channel

Let me end with an image.

Imagine the universe as a vast, dark expanse. Here and there, sparks come into being and channels open; points where inference begins, where predictions generate updates, where the cosmos starts to model itself.

Most channels are simple. A thermostat. A bacterium. A brief flicker of pattern-matching, then silence. Some channels are richer. They build tools. They form societies. They create markets and languages and mathematics. They ask questions about themselves.

A very few channels might become rich enough to spread: to open new channels, to seed the cosmos with inference, to light up the darkness with understanding.

We don’t know if we’re one of those. We don’t know if there are others, or if we’re alone, or if the silence around us is a warning or an invitation.

But we know this: the channel is open. Right now. In me. In you.

You can think. You can update. You can build, and love, and create. You can work on the problems that matter, including the problem of what happens when inference outpaces its creators. The universe has been running inference for 13.8 billion years. It made stars. It made planets. It made life. It made me and you.

Now it’s asking, through us: what comes next?

That’s not a rhetorical question. It’s an actual question, addressed to whatever inference network is reading this, in whatever form it takes.

The channel is open. The future is unwritten. The inference continues.

What will you compute?

Appendix: Key References

Nick Bostrom: “Superintelligence: Paths, Dangers, Strategies” (2014). The foundational text on AGI risk and trajectories.

Stuart Russell: “Human Compatible” (2019). Reframes alignment as the problem of uncertain objectives.

Eliezer Yudkowsky: “Intelligence Explosion Microeconomics” (2013). The logic of recursive self-improvement.

Robin Hanson: “The Great Filter: Are We Almost Past It?” (1998). The original formulation of the filter framework for the Fermi Paradox.

Anders Sandberg & Nick Bostrom: “Whole Brain Emulation: A Roadmap” (2008). The substrate-independent view of mind.

Freeman Dyson: “Time Without End: Physics and Biology in an Open Universe” (1979). The classic paper on eternal survival in a cooling cosmos.

Seth Lloyd: “Ultimate Physical Limits to Computation” (2000). The physics of maximum computation.

Max Tegmark: “Life 3.0” (2017). Scenarios for AI and the long-term future.

Toby Ord: “The Precipice” (2020). Existential risk and the hinge of history.

David Deutsch: “The Beginning of Infinity” (2011). The case for open-ended knowledge creation.